Your team recently switched to spot instances.

The provider’s billing console showed discounts of 60% to 70%, but the monthly bill reflected more modest savings. Prices changed over time, and when the FinOps lead asked why, there was no clear explanation. The answer lies in how spot pricing works.

Spot instances have been one of the cloud's cost levers for over a decade, yet actual savings often fall short of what providers advertise.

Two models govern spot pricing: provider-controlled pricing, the default on most major platforms, and open-market auction pricing, in which buyers participate in price formation. Any provider can fall into either category, and this article examines both to show which one is shaping your bill.

TL;DR

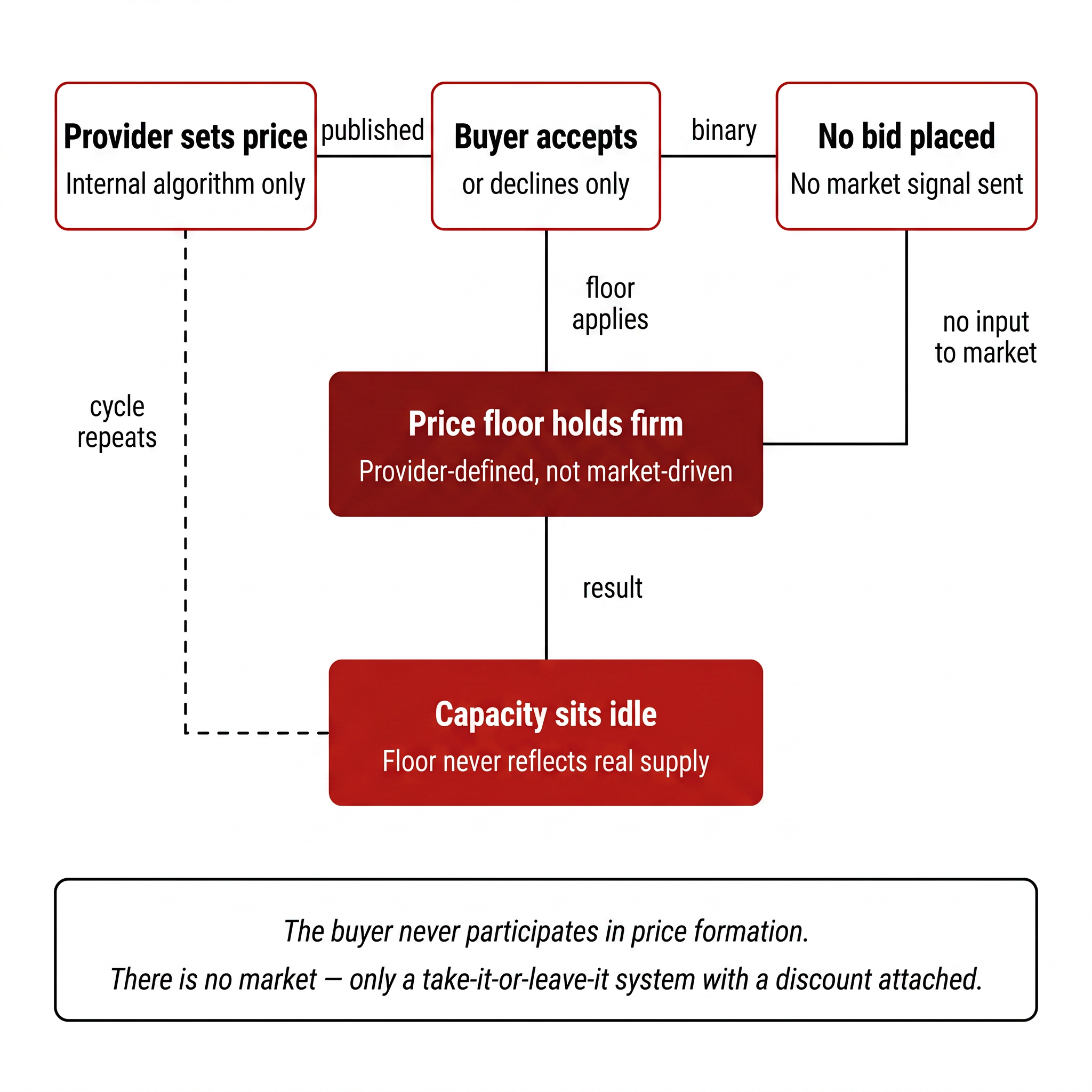

Most cloud providers set spot prices internally. Amazon Web Services (AWS), Google Cloud, and Azure all use opaque systems to determine what buyers pay. The buyer's only choice is to accept the price or not use the capacity. In practice, it’s more like a provider-defined discount than a live market.

This model limits how much pricing can move in your favor. Prices move based on signals buyers cannot see, and minimum pricing thresholds remain in place regardless of how much spare capacity exists. Savings level off over time, no matter how the infrastructure is tuned.

A true open market auction works differently. Buyers submit bids, supply meets demand, and the final price emerges from competition between participants, not from internal pricing logic. AWS ran this model from 2009 to 2017, and once it was removed, average spot prices moved higher.

Rackspace Spot revived the auction model in 2024. Since then, bid volume has grown 100x, with 96.8% of active bidders now running entirely on spot with no on-demand fallback. Eviction rates dropped from roughly 5% in early 2024 to under 0.1% by Q1 2026, and across hundreds of thousands of provisioned instances, only 0.68% were ever evicted.

Teams using the auction model consistently report savings that go two to three times deeper than what provider-controlled spot delivers. One documented case ran workloads at roughly 1% of equivalent provider cost, reflecting how differently the pricing model behaves under real market competition.

How provider-controlled spot pricing works and where it falls short

Provider-controlled spot pricing means the cloud provider sets the price, adjusts it, and defines the minimum level it will not go below. The buyer has one choice: accept or walk away. There is no bidding, no market signal, and no way for buyers to influence what they pay.

This model takes three common forms across the industry:

Smoothed variable pricing adjusts prices gradually based on internal capacity signals. AWS spot pricing works this way today. Prices shift based on long-term supply trends, not live demand, and when they change, buyers have no visible trigger to anticipate.

Fixed discount pricing offers a set percentage off on-demand rates. Google Cloud Spot VMs advertise discounts up to 91%. The discount looks attractive, but the provider defines the range. Buyers cannot push prices lower, even when large amounts of capacity sit idle in a region.

Capped variable pricing lets buyers set a maximum price threshold, but pricing logic stays entirely within the provider's control. Azure Spot VMs operate this way. The threshold only determines when the instance is terminated, not the rate the buyer pays before that point.
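To make the capped model concrete, here is a minimal sketch of how a buyer-set maximum price interacts with a provider-set hourly price. The function name `bill_capped_spot` and the price series are hypothetical, and the sketch is a simplification: it ignores capacity-driven evictions and assumes hourly billing at whatever rate the provider publishes.

```python
def bill_capped_spot(max_price: float, hourly_prices: list[float]) -> tuple[int, float]:
    """Return (hours_run, total_cost) for an instance with a buyer-set price cap.

    Simplified model: the buyer always pays the provider's price for the hour.
    The cap is only checked to decide whether the instance keeps running.
    """
    hours_run = 0
    total_cost = 0.0
    for price in hourly_prices:
        if price > max_price:
            break  # provider price crossed the cap: the instance is evicted
        total_cost += price  # billed at the provider's rate, not at the cap
        hours_run += 1
    return hours_run, total_cost


# Hypothetical provider-set prices in USD per hour.
prices = [0.040, 0.042, 0.045, 0.060, 0.041]

low_cap = bill_capped_spot(0.050, prices)   # evicted when the price hits 0.060
high_cap = bill_capped_spot(0.100, prices)  # survives the whole series

print(low_cap, high_cap)
```

In this sketch, raising the cap buys more runtime but never a lower hourly rate: both runs pay exactly the same price for the first three hours.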

All three forms share the same weaknesses:

Price opacity: Buyers can see prices but have no visibility into why they change.

Price floors: Minimum prices hold even when regional capacity is underused, so the real cost of idle compute never reaches the buyer.

Unpredictable interruptions: Evictions come with short notice, typically 30 seconds to 2 minutes, depending on the platform. Even correctly architected workloads get interrupted because the trigger is an internal capacity decision, not a visible price signal.

The diagram below captures the core dynamic:

The next question is what a true open market auction looks like and how it changes outcomes for buyers.

What a true open market auction actually is

Most teams assume provider-controlled spot pricing is already purely market-driven. It is not, and part of the confusion stems from the language providers still use. AWS retired its bidding model in 2017 but still references bid price terminology in its documentation, leaving many teams with the impression they are participating in a live market when they are not. The difference becomes apparent the moment you see how a real auction actually works.

How price formation works in a real market

A true open-market auction is a pricing mechanism where the final price emerges from competition between buyers. Supply meets demand directly, and the clearing price reflects what participants are actually willing to pay.

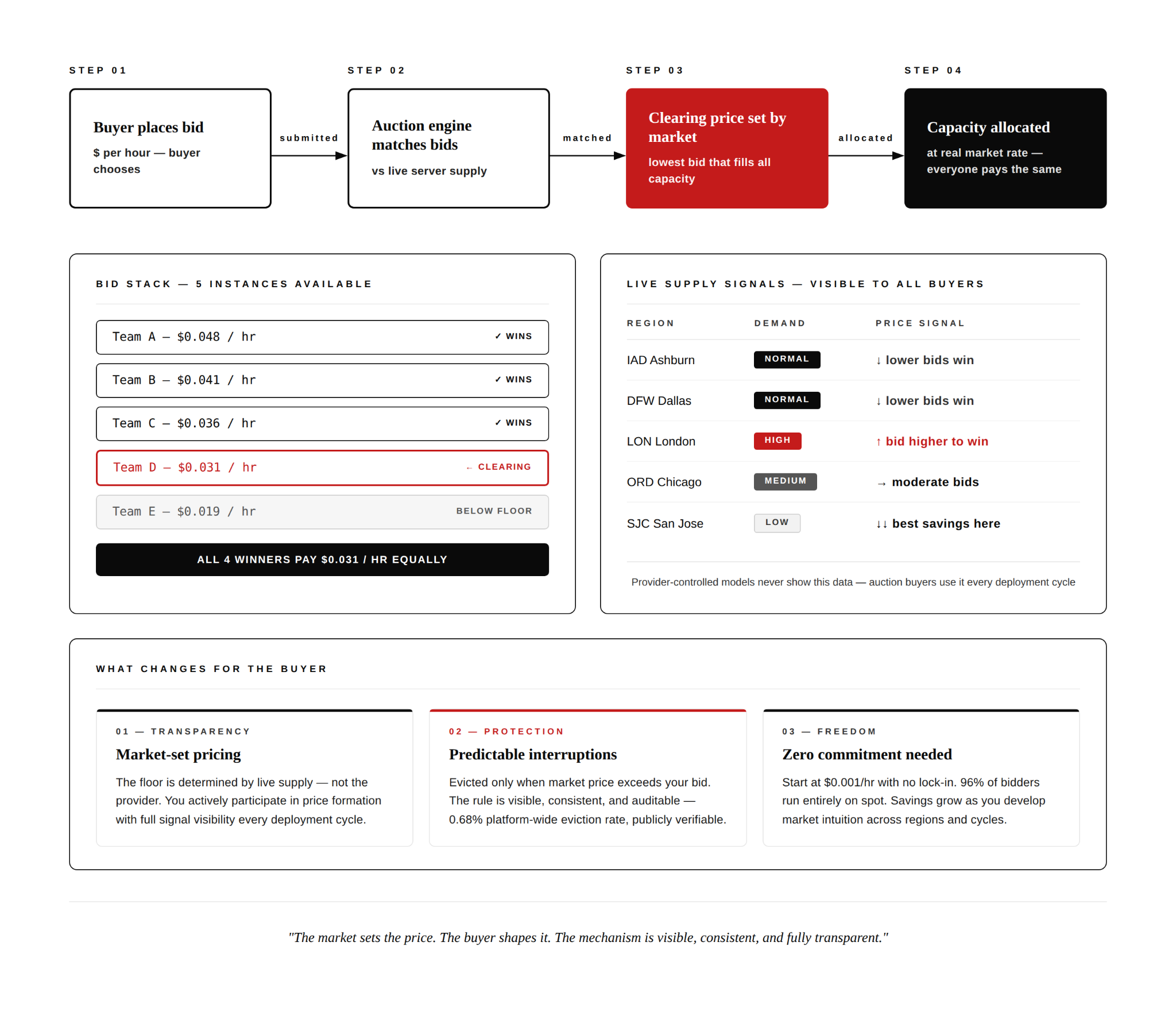

Here is how it works in practice:

- Buyers submit bids stating the maximum price they are willing to pay per hour for compute capacity.

- An auction engine matches bids against available server supply in real time.

- The clearing price, defined as the lowest successful bid that fills all available capacity, becomes the price every winner pays, regardless of their individual bid amount.

- When supply exceeds demand, prices fall. When demand increases, prices rise. Movements are transparent and tied to market activity.

A simple example makes this clearer. If five instances are available and seven teams place bids, the system ranks all bids from highest to lowest and assigns capacity to the top five. The fifth-highest bid becomes the clearing price. All five winners pay that same rate, while the remaining two receive nothing.
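The seven-bid example can be sketched in a few lines of Python. This is an illustration of a generic uniform-price auction under the rules described above, not any platform's actual engine. The function name `clear_uniform_price_auction`, the team names, and the bid values are all hypothetical.

```python
from typing import NamedTuple, Optional


class AuctionResult(NamedTuple):
    winners: list[str]
    losers: list[str]
    clearing_price: Optional[float]


def clear_uniform_price_auction(bids: dict[str, float], capacity: int) -> AuctionResult:
    """Rank bids from highest to lowest and fill the available capacity.

    Every winner pays the same clearing price: the lowest winning bid.
    """
    ranked = sorted(bids.items(), key=lambda item: item[1], reverse=True)
    winning = ranked[:capacity]
    losing = ranked[capacity:]
    # With no winners (no bids or no capacity) there is no clearing price.
    clearing_price = winning[-1][1] if winning else None
    return AuctionResult(
        winners=[name for name, _ in winning],
        losers=[name for name, _ in losing],
        clearing_price=clearing_price,
    )


# Hypothetical maximum bids in USD per hour: seven teams, five instances.
bids = {
    "team-a": 0.050,
    "team-b": 0.042,
    "team-c": 0.038,
    "team-d": 0.031,
    "team-e": 0.027,
    "team-f": 0.019,
    "team-g": 0.012,
}

result = clear_uniform_price_auction(bids, capacity=5)
print(result.winners)         # team-a through team-e
print(result.clearing_price)  # 0.027, the fifth-highest bid
print(result.losers)          # team-f and team-g receive nothing
```

Note that team-a bid 0.050 but pays 0.027, the same rate as every other winner. That is the property that separates a uniform-price auction from a pay-as-bid one.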

The diagram below shows how bids are ranked, capacity is assigned, and the clearing price is set.

This is the same uniform-price auction model used in US Treasury bill auctions and wholesale electricity markets. It is a well-established mechanism designed for transparent price discovery.

Under this model, interruptions follow a clear rule: an instance is preempted when the market price rises above the buyer’s bid. Teams can see what drove a price movement, adjust their next bid accordingly, and build a bidding strategy that improves over time. This reflects how spot pricing was originally designed.
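The preemption rule is simple enough to express directly. The sketch below assumes the rule exactly as stated here (a workload keeps running while its bid is at or above the market price); the function name, workload names, and prices are hypothetical.

```python
def split_by_market_price(
    bids: dict[str, float], market_price: float
) -> tuple[list[str], list[str]]:
    """Split workloads into (running, preempted) for a given market price."""
    running = sorted(name for name, bid in bids.items() if bid >= market_price)
    preempted = sorted(name for name, bid in bids.items() if bid < market_price)
    return running, preempted


# Hypothetical maximum bids in USD per hour.
bids = {"batch-etl": 0.020, "ci-runners": 0.035, "postgres": 0.080}

running, preempted = split_by_market_price(bids, market_price=0.030)
print(running)    # ['ci-runners', 'postgres']
print(preempted)  # ['batch-etl']
```

Because the rule depends only on two visible numbers, a team can replay it against past prices to see which bid would have kept a workload running.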

The history behind cloud spot pricing

The auction model for cloud compute dates back to 2009, when AWS launched the first large-scale public cloud spot auction. In 2017, AWS replaced the system with provider-managed price smoothing. The shift removed buyers' access to the low-demand pricing conditions the auction had made possible, and average prices increased over time. Some would even say the auction model became AWS's own biggest competitor, given how cheap compute could get and how much buyers were able to save.

Neither GCP nor Azure ever ran a true auction, launching spot pricing on provider-managed variable models from day one.

Rackspace Spot reintroduced the auction model with full price transparency, no hidden reserve prices, and a minimum base price of $0.001 per hour. The platform is not recreating the AWS auction era. It is building a fully transparent mechanism that earlier implementations claimed to offer but never actually delivered. For more detail, see the history of spot instances.

Today, dozens of active auctions run simultaneously across 7 global regions, with hundreds of organizations running production workloads, including memory-optimized and GPU compute, through the same open market mechanism. The differences between the two models become clearer when you examine the mechanics behind each pricing model and how they shape actual spot costs.

The mechanics behind each pricing model and how they shape your actual spot costs

Two pricing architectures exist in cloud spot instances today, and they produce very different cost outcomes.

The table below maps the underlying structure to help clarify which model a team operates on and how that shows up in actual cost patterns.

Buyer influence on price is the sharpest structural difference. On a provider-controlled platform, buyers are price-takers. In an auction model, their bids help determine the clearing price. A team running overnight ML training jobs on a provider-controlled platform pays the published rate regardless of how much idle capacity exists in a region. On an auction model, that same team submits a bid that competes against real supply conditions.

Interruption logic determines whether spot instances can support workloads beyond simple batch jobs. Rackspace Spot platform data shows that the average spot instance runs for several days without interruption. Memory-optimized instances, the category most associated with databases and stateful workloads, sustain even longer continuous runtimes on average.

The savings ceiling is where market-based pricing changes how low compute costs can go during periods of unused capacity. In an auction model, pricing responds directly to live demand, giving buyers more opportunity to capture real cost reductions. A Rackspace Spot user documented exactly this in practice, sharing how they ran a full data services stack including PostgreSQL, Redis, and Elasticsearch entirely on spot, achieving an 85 to 90% reduction in total infrastructure costs compared to hyperscaler equivalents under the same workload conditions.

Cost of identical compute specs across pricing models

The tables below use the same compute specification, 4 vCPU and 16GB RAM, compared across on-demand, provider-controlled spot, and open market auction pricing.

This sets the context for how the auction model reduces Kubernetes compute costs in practice.

How the auction model reduces Kubernetes compute costs in practice

Running Kubernetes is expensive. Aside from compute, you are paying for egress, storage, control-plane costs, and operational overhead. Managed Kubernetes services cost three times as much as running equivalent virtual machines before any of that is factored in.

In addition, studies show up to 80% of CPU resources in Kubernetes environments sit idle, yet teams still absorb full control-plane and node costs. Even when spot nodes are used, the provider's pricing floor means the savings on that idle capacity are never fully realized.

The auction model changes this by letting the market determine compute costs, with node pricing starting from $0.001 and clearing at whatever price real supply and demand settles on. With Rackspace Spot's managed Kubernetes, you can select spot instances as your cluster nodes, putting the auction model to work directly on your compute costs.

One of our customers, OpsMx, a DevSecOps platform provider, ran development, test, and demo workloads on a major provider's spot offering and found costs only marginally lower than with savings plans. After moving to an auction-based model, the company reported an 83% reduction in infrastructure costs.

The auction model does not guarantee the lowest possible price at every moment. During high-demand periods, costs can increase. What stays consistent is visibility. Pricing movements are transparent to buyers, enabling teams to adjust their bids and deployment behavior in line with real market conditions. The operational question here is how teams apply those pricing signals. The next section examines what changes when teams move to auction-based pricing.

What changes when your team switches to auction pricing

Workload placement changes. Teams that previously avoided spot for stateful workloads find they can run them confidently on Rackspace Spot because of how stable the platform is. With eviction rates under 0.1%, the interruption risk that made stateful workloads impractical on spot is no longer the barrier it once was. The State of Spot 2026 report reflects this directly: 51% of cloudspaces on the platform run stateful applications, and 64% of workloads are stateless deployments with 20% being batch or ML workloads.

Cost behavior changes in a way that compounds. For teams where compute is among the largest infrastructure expenses, even small pricing differences add up over billing cycles. Auction pricing removes the discount ceiling that provider-controlled models impose, meaning cost reductions can go deeper during periods of low demand rather than leveling off at whatever floor the provider has set.

Teams switching to auction pricing also don't have to give up the breadth of compute options they had with other providers. Rackspace Spot offers compute options spanning general purpose, compute-optimized, memory-optimized, and GPU-accelerated workloads across 7 global regions.

For teams where pricing transparency, workload placement, and compute variety all matter, the auction model addresses each directly. What follows is a practical path for teams making that transition.

How to start moving toward the auction model

Teams can evaluate whether the auction model fits their workloads and cost structure without committing to a full infrastructure migration.

The steps below allow teams to test the model incrementally.

Start with a workload audit

A fault-tolerant workload continues operating even when individual components are interrupted. Batch processing pipelines that restart from checkpoints, CI/CD runners that automatically retry failed jobs, and ML training jobs that resume from saved states are common candidates for auction-based pricing.
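As a rough illustration of what "restart from a checkpoint" means in practice, the sketch below processes a list of items and saves progress after each one, so a run that is interrupted partway can resume without redoing finished work. The function name, the JSON checkpoint layout, and the `fail_at` parameter (used only to simulate a preemption) are assumptions made for this example.

```python
import json
import os
import tempfile
from pathlib import Path


def process_items(items, checkpoint_path, fail_at=None):
    """Sum the items in order, saving progress so a restarted run resumes."""
    path = Path(checkpoint_path)
    if path.exists():
        state = json.loads(path.read_text())
    else:
        state = {"next_index": 0, "total": 0}

    for index in range(state["next_index"], len(items)):
        if fail_at is not None and index == fail_at:
            raise RuntimeError("simulated preemption")
        state["total"] += items[index]
        state["next_index"] = index + 1
        # Write to a temp file and rename it, so an interruption in the
        # middle of a write cannot leave a corrupted checkpoint behind.
        tmp_path = path.with_suffix(".tmp")
        tmp_path.write_text(json.dumps(state))
        os.replace(tmp_path, path)
    return state["total"]


with tempfile.TemporaryDirectory() as workdir:
    checkpoint = Path(workdir) / "checkpoint.json"
    items = [1, 2, 3, 4, 5]
    try:
        process_items(items, checkpoint, fail_at=3)  # interrupted after three items
    except RuntimeError:
        pass
    total = process_items(items, checkpoint)  # resumes at the fourth item

print(total)  # 15
```

A workload that already behaves like this loses at most one unit of work per interruption, which is what makes it a low-risk first candidate.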

If a team is already running spot instances on a provider-controlled platform, reviewing the last 90 days of spend is a useful baseline. Compare the effective discount rate against on-demand pricing, count every interruption that occurred, and note whether each was explainable from the provider console or documentation.

If the interruptions cannot be explained from available provider data, teams are left without a clear view into the pricing conditions behind them.
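The baseline described above comes down to two numbers: the effective discount against on-demand and the share of interruptions that could not be explained. A minimal sketch follows. The function names and every figure in the example are placeholders for illustration, not measurements from any provider.

```python
def effective_discount(spot_spend: float, instance_hours: float, on_demand_rate: float) -> float:
    """Fraction saved versus running the same instance-hours on demand."""
    on_demand_equivalent = instance_hours * on_demand_rate
    if on_demand_equivalent <= 0:
        raise ValueError("instance_hours and on_demand_rate must be positive")
    return 1 - spot_spend / on_demand_equivalent


def unexplained_share(interruptions: list[dict]) -> float:
    """Fraction of interruptions with no explanation in provider data."""
    if not interruptions:
        return 0.0
    unexplained = sum(1 for event in interruptions if not event["explained"])
    return unexplained / len(interruptions)


# Placeholder 90-day figures: replace with values from your own billing export.
discount = effective_discount(spot_spend=1100.0, instance_hours=10_000, on_demand_rate=0.20)

# Placeholder interruption log, tagged by hand during the audit.
log = [
    {"instance": "i-1", "explained": True},
    {"instance": "i-2", "explained": False},
    {"instance": "i-3", "explained": False},
    {"instance": "i-4", "explained": False},
]
share = unexplained_share(log)

print(f"effective discount: {discount:.0%}")      # 45%
print(f"unexplained interruptions: {share:.0%}")  # 75%
```

Comparing the computed discount with the advertised one shows how far actual savings fall short of the headline figure.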

Run a parallel cost test before committing

Deploy the same workload on the current platform and on an auction-based model simultaneously for two to four weeks. Compare actual cost, interruption frequency, and the effort required to manage each environment.
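One way to keep the comparison fair is to normalize both environments to the same units before looking at totals. The sketch below assumes a hypothetical `TrialResult` record per platform; the figures are placeholders, not results. Management effort is left out because it is harder to reduce to a single number.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TrialResult:
    platform: str
    total_cost: float      # USD spent during the trial
    instance_hours: float  # instance-hours actually consumed
    interruptions: int     # evictions observed during the trial

    @property
    def cost_per_hour(self) -> float:
        return self.total_cost / self.instance_hours

    @property
    def interruptions_per_1000_hours(self) -> float:
        return 1000 * self.interruptions / self.instance_hours


def relative_saving(baseline: TrialResult, candidate: TrialResult) -> float:
    """Fraction by which the candidate's hourly cost undercuts the baseline's."""
    return 1 - candidate.cost_per_hour / baseline.cost_per_hour


# Placeholder trial data: substitute the numbers from your own test.
current = TrialResult("current-spot", total_cost=240.0, instance_hours=2000, interruptions=6)
auction = TrialResult("auction-spot", total_cost=80.0, instance_hours=2000, interruptions=2)

for trial in (current, auction):
    print(trial.platform, trial.cost_per_hour, trial.interruptions_per_1000_hours)
print(f"relative saving: {relative_saving(current, auction):.1%}")
```

Normalizing per instance-hour matters when the two environments end up running for different durations or at different scales during the trial.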

Evaluate managed Kubernetes as your delivery layer

One reason teams delay moving spot workloads is the operational burden of managing the underlying infrastructure. Control-plane maintenance, node preemption handling, and cluster upgrades can reduce the savings gained from lower compute pricing.

Rackspace Spot delivers the auction model through fully managed Kubernetes clusters. The platform handles those operational concerns directly, and preemption alerts are delivered via Slack webhooks. The parallel test described above provides teams with enough signal to evaluate both pricing behavior and the operational model before making longer-term infrastructure decisions.

The pricing model is the strategy

Spot pricing was originally designed to match idle compute capacity with buyers willing to pay market rates for it. AWS moved away from this model over time, replacing live market mechanisms with managed pricing systems designed around pricing stability and revenue predictability.

For teams managing infrastructure costs at scale, the more important question is how much visibility and influence buyers actually have over pricing behavior, not simply which provider advertises the largest discount.

Rackspace Spot is currently the only platform operating a live open-market auction for cloud compute. Teams can view current auction pricing, compare it directly against existing cloud costs, and explore the model without long-term contracts or upfront commitment.

Get started at spot.rackspace.com